[1] Essentialism:

In Plato’s Phaedrus, Socrates declares that speech bears a closer relation to thought than writing. Like any uncompromising tutor, Socrates insists that Phaedrus recount his morning conversation with the orator Lysias on the subject of love without any textual aid. Phaedrus made to remove his written notes from that morning’s conversation, and Socrates admonished him for doing so. Such an admonishment may have been disconcerting to Phaedrus, but as Socrates reasons, recounting things as they happened could only be of benefit to working memory.

Later, in the same dialogue, Socrates relates to the young Phaedrus a curious legend regarding the Egyptian king Thamus. The god Theuth had come to Thamus with the invention of writing, so that the Egyptian people could enjoy this gift from their beneficent king. However, Thamus concluded that his people would be much better off without writing, because such an invention would not improve the memory of his people, but do the opposite. Thamus responds: “The specific which you have discovered is an aid not to memory, but to reminiscence, and you give your disciples not truth, but only the semblance of truth.” Therefore, Socrates famously rejected the “technological” innovation of writing. Writing was meant to be secondary to the methods and insights of face-to-face dialogue (i.e., the Socratic method).

Ironically, Western civilization has taken its literate heritage from Greek philosophy. Even Plato intended writing to be secondary to logically constructed thought and speech. But for both Plato and Socrates, genuine knowledge consisted of what was unchanging and eternal. For instance, there could be no “true” knowledge of a particular river, but only of the pure Idea (or eidos) of “river.” From this literary heritage – written down in words no less – models and laws were affixed to the processes of learning, and were aided by the Enlightenment. This has done much to separate man from the natural world (it has been argued that Plato’s popularly-held conception of eidos has contributed profoundly to civilization’s distrust of nature as well as bodily, sensorial experience; see quotation below), but it has also led (perhaps unintentionally) to an essentialist view of literacy in education. This is the idea that there is only one meaning (one eidos, if you will) and the goal of education is to convey that meaning across cultures.

“. . . the process of learning to read and to write with the alphabet engenders a new, profoundly reflexive, sense of self. The capacity to view and even to dialogue with one’s own words after writing them down, or even in the process of writing them down, enables a new sense of autonomy and independence from others, and even from the sensuous surroundings that had earlier been one’s constant interlocutor. The fact that one’s scripted words can be returned to and pondered at any time that one chooses, regardless of when, or in what situation, they were first recorded, grants a timeless quality to this new reflective self, a sense of the relative independence of one’s verbal, speaking self from the breathing body with its shifting needs. The literate self cannot help but feel its own transcendence and timelessness relative to the fleeting world of corporeal experience.” From The Spell of the Sensuous by David Abram

[2] A word on pedagogy:

Traditional pedagogy adopted essentialism not based on philosophical antecedents alone, but also because essentialism went hand-in-hand with the whole idea of national progress. In Ken Robinson’s words, education was “conceived in the intellectual culture of the Enlightenment and in the economic circumstances of the Industrial Revolution.” Public education was no doubt revolutionary for its time and place, but society and culture have changed dramatically in the last sixty years, and education has tarried behind. Even today, education is based on league tables, standardized test scores, and examinations. The standardizing of public education is done to achieve a capital outlay, so to speak (the proper term is performance pay), with returns so poor that a teacher’s salary often suffers. But even with the advancements in technology, the ideal to maximize efficiency is given a new edge. Indeed, the emphasis on standard expectations readily identifies individuals who are not performing “efficiently” in comparison with their peers. Furthermore, if the fundamental purpose of education is “to ensure that all students benefit from learning in ways that allow them to participate fully in public, community, and economic life,” then even the New London Group is distracted from the current reality. It is impossible to predict with accuracy what things will look like publicly, communally, and especially economically in the future:

“The purpose of education is often said to be to equip young people with skills ‘for the real world’, as if there were – in some dimension of genuine reality – such a thing, to be known so indubitably that we could stipulate what skills might be required to deal with it, rather than, inevitably, interpretations of and perspectives on it.” In “Poststructuralism, Postmodernism, and Education” by Richard Smith

[3] Singularity:

Marc Prensky is responsible for coining the term “digital native,” which has been used in the work of John Palfrey and Urs Gasser (see my previous post). While the exhalation of distress over the decline in the US education system issues forth from teachers, political pundits, school board members, etc., Prensky steps back to make an obvious claim: “Today’s students are no longer the people our educational system was designed to teach.” The rapid takeover of digital technology in every area of life has led to a “singularity”: an event in which new trends are no longer simply reformulations of the old. In understanding nascent technologies, it is often the young who are leading the way, and adults are anxiously trailing behind. In education, models of learning are immutable because curricula have been devised by digital immigrants (or teachers with little experience with computers outside using email clients or academic domains) who “know better.” Indeed, the student who grew up on technology can no longer maintain interest in static lesson plans with no imagery or graphics. We are often disparaged by digital immigrant instructors who converse in an academic argot that is not in tune with our memes, colloquialisms, and digitally lexical interests. This often leads to a “those kids” mentality on the part of the instructors, who give up on helping students, because students “just don’t care to engage.”

Still, there may be good reason for teachers to be so disparaging. Prensky notes that “today’s students think and process information fundamentally differently from their predecessors.” There is nothing wrong with processing information differently, per se. But there is evidence to suggest that digital natives are negatively shaped by emerging technologies, both biologically and neurologically. For instance, the renowned scientist Susan Greenfield believes that the “modern” world is altering our human identity. With the influence of emerging technologies, Greenfield notes that “[a]ttention spans are shorter, personal communication skills are reduced and there’s a marked reduction in the ability to think abstractly.” More startling is the apparent use of technology among those just beginning to bud into reciprocative persons. The effects of technology on developing brains obviously go deep. It is no wonder then that digital natives engage so masterfully with new media, even if it is at the expense of their memory and cognitive capacity:

“We could be raising a hedonistic generation who live only in the thrill of the computer-generated moment, and are in distinct danger of detaching themselves from what the rest of us would consider the real world.” – Susan Greenfield

[4] Post-structuralism:

In 1979, Jean-François Lyotard published The Postmodern Condition. In it, Lyotard states that, “the miniaturisation and commercialisation of machines is already changing the way in which learning is acquired, classified, made available, and exploited. . . The old principle that the acquisition of knowledge is indissociable from the training (Bildung) of minds, or even of individuals, is becoming obsolete and will become ever more so.” Here, I think, are some rather prescient comments about the influence of computers on learning and knowledge in a postindustrial and postmodern society. We have already seen how schooling was predicated on industrialization (and before that, on a classical Greek variant of essentialism), but when new digital media takes over the classroom, the first shift in any pedagogical organization comes in the form of a linguistic paradigm of mediation. The research team of the Upper Yarra Community House have demonstrated in their New Literacies Project that being literate now means being able to “decode written text, understand and compose meaningful texts, use texts functionally and analyze texts critically.” Essentially, digitally written communications are now characterized by morphing sets of graphemes, spellings, hypertexts, etc. In other words, new semiotic systems are evolving every day, and it is becoming increasingly important to rein these in to analyze and interpret their content.

In Sherry Turkle’s book Life on the Screen, Turkle notes that “the mechanical engines of computers have been grounding the radically nonmechanical philosophy of postmodernism.” She relates an anecdote where a student of hers did not understand Jacques Derrida’s theory of deconstruction until an early Macintosh computer showed him stacks of hypertext. With hypertext, suddenly the student was made aware of the multiplicity of possible meanings hidden behind a single text. For the purposes of this essay, it will be best not to get too caught up in post-structural concepts. But the mission of education in the 21st century, as outlined by The New London Group and others, is to strip away the monocultural and monolingual monopoly on texts. This makes room for diversity, creativity and individuality. As Richard Smith puts it, “Postmodern knowledge refines our sensitivity to differences and reinforces our ability to tolerate the incommensurable.” These new measures, however, are still interconnected with the technological.

In some theoretical circles, there is an interesting discussion about the end of postmodernism, which I view as very positive, but have no hope of seeing, because this discussion involves radical ethics that do not comport well with the current political reality. Still, what I think schools are lacking is a proper critical distancing from technology, not just in the Philosophy department, but in all departments (I am not even going to broach K-12. Suffice it to say that I think primary school should be based on creative play and fundamental learning, whereas secondary school should be more focused on abstract thinking; neither mediated by technology). My class on digital writing began with figures like Howard Rheingold, Guy Debord, Michel Foucault, and Jean Baudrillard, so for my purposes, I am relatively pacified. But I still perceive an implicit acceptance of technology (in computer-oriented classrooms), despite some critical engagement with theorists.

“The ideology of those who desire to be wired and who see the Internet as the experimental grounds for allegedly heterogeneous experiments with alternative subject-positions is integral to the political economy of bio-technological capitalism.” – Rosi Braidotti

[5] Psychê:

I have earnestly written about my interaction with new digital media elsewhere, so I don’t think I need to say much more about my personal feelings toward emerging technologies. I am cautious, if not downright dismissive, of most technology. This is mainly due to my interest and reading in the philosophy of self, continental phenomenology, and deep ecology. I view the techno-capitalist world in which we live as inherently destructive, and have a hard time reconciling myself to the idea that technology is meant to be an integral part of human experience. When it comes to social media, I echo figures like Noam Chomsky, who maintain that social media conditions people to think and act superficially, with little or no serious thought or reflection. Still, I am not ignorant of the benefits technology has brought to society. New media websites that are participatory are quite different from the top-down mass media of which intellectuals like Chomsky disapprove. For instance, the pressures put on Mubarak during the Arab Spring offer a counterexample to media critics about the power of social networking. However, restrictive regimes are still finding a way to “pull the plug,” so to speak, from a united and democratic citizenry. This returns the entire conversation back to critical theory, in which what is panoptic in any governmental system needs to be defined and checked for abuses.

In roughly 370 BC, Plato defined the soul as being the core essence of any living being. The process of writing can, at best then, only capture elements of one’s own “soul” or personhood. What one puts down in writing today, unless reflected on ad infinitum, will mostly be lost or fragmented in the days that follow. Indeed, what is written down will have to be continuously referred to by the writer (maybe not so much the rare savant) in order to deliberate its full weight and meaning. If this is true, then Socrates was not all that insane. In fact, I think he was correct to suggest that writing was only an aid to reminiscence. In other words, writing is very self-reflexive and has nothing to do with essences or what makes a person who they really are. I think that is why the great writers have often been lost to themselves. For instance, Franz Kafka burned 90 percent of his manuscripts. Virginia Woolf, Ernest Hemingway, and Hunter S. Thompson all chose to kill themselves. At any rate, writing is, of course, a skill that has a lot of value and virtue in the arts. Digital writing can have the same value, so long as digital literacy is taught as an adjunct to traditional forms of literacy. But to foster a truly enlightened citizenry, individuals need to find happiness in the skills that they learn, and these skills need to be relevant socially, publicly, and economically. That is why I like Ken Robinson so much, because he understands the need for creativity in developing a productive, vivacious society.

So much of the University experience is not productive or vivacious. It is rote and unappealing. As an undergraduate in the arts, I am often lectured on Enlightenment ideals. This is not a surprise, since I am a history major. But the content of my professors’ lectures is not the problem; it is the delivery of their lectures that leaves something to be desired. It may seem that, based on what I have written here, I am all for purely lecture and discussion-driven teaching. However, I think this really only works in close-knit discussion groups on subjects where individuals share a mutual passion. Given the preceding discussion on digital natives, University teachers should give visual lectures just as much as verbal ones. Only one out of my four history professors thus far in my University experience has decided to do this. The others have disparaged media in the classroom (and the students who have dared to use it). As a digital native (albeit a strange one), I feel that I can do better teaching myself, and so I focus as much as I can on reading what interests me. Still, the standards are set, and the drudgery continues. Maybe a little bit of video games to take my mind off things?

“I have maintained a passionate interest in education, which leads me occasionally to make foolish and ill-considered remarks alleging that not everything is well in our schools. My main concern is that an over-emphasis on testing and league tables has led to a lack of time and freedom for a true, imaginative and humane engagement with literature.” – Philip Pullman

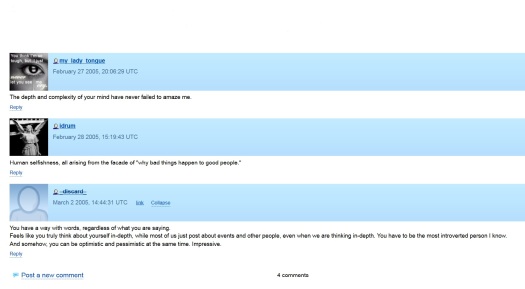

As the final requirement for a course I took on Writing for New Media, I was made to develop a forward-looking strategy for my online social media presence. Writing for New Media was basically an introductory course to Web authoring, but not in the technical sense of the term. Since the course was designed by the Writing and Rhetoric department at Oakland University, emphasis was on social media theory and digital literacy; not desktop publishing (like HTML coding or graphic design). The intention, at the end, was to understand how to develop and maintain an academic or professional Web presence. My Google Sites website above was made for that express purpose. While I am aware that some of my interests and cultural references linked through this site may not be agreeable to all parties, it is my hope that I project an honest digital representation of who I am and aspire to be. Part of accomplishing this is realizing that my social networks need to be appropriate for everyone I can possibly imagine viewing them.
